Apple M5 Max vs NVIDIA: Can Apple Dethrone CUDA?

The M5 Max promises ~70 TFLOPS FP16 through dedicated Neural Accelerators and 128 GB unified memory at 614 GB/s. We analyze the architecture, benchmark Apple's claims, and compare head-to-head with NVIDIA for AI inference.

For fifteen years, “serious AI compute” meant one thing: NVIDIA. CUDA dominated training, inference, and every step in between. If your workload touched a tensor, you reached for a card with a green logo.

The M5 Max could change that calculus. Not with marketing language, but with what appears to be dedicated matrix-multiplication hardware inside the GPU for the first time in Apple Silicon history. Based on Apple’s published specifications, the 40-core M5 Max should deliver approximately 70 TFLOPS FP16 through 1,024 fused multiply-accumulate operations per core per cycle. It pairs that with 128 GB of unified memory at 614 GB/s, all of it GPU-addressable, all of it available to AI workloads without a single memory copy.

This isn’t a press release recap, and it isn’t a product review, either. The M5 Max isn’t widely available yet, and independent benchmarks are scarce. What follows is a technical analysis of what Apple says it built, what the architecture specs suggest is possible, where the design could break down, and why we think this is Apple’s strongest bid yet to challenge NVIDIA in edge AI. If you work with large language models, VFX pipelines, or AI inference at the edge, this is the analysis that will help you decide whether the M5 Max deserves a spot on your evaluation list.

TL;DR — Key Takeaways

The M5 Max is Apple’s first chip with dedicated matrix-multiplication hardware inside the GPU, promising ~70 TFLOPS FP16, a potential 4× architectural leap over the M4 Max’s 18.4 TFLOPS through shared ALUs. Its 128 GB unified memory could eliminate the VRAM cliff that cripples NVIDIA cards on 70B+ models. If Apple’s specs hold up under real-world testing, this would make it the strongest single-device platform for large-model local inference. NVIDIA still dominates raw compute (5–28× more TFLOPS), small-model speed, and training at scale. Apple appears to be making its most serious play yet for edge AI and local inference, not datacenter training.

The Fusion Architecture: Apple Goes Chiplet

What Is Fusion Architecture?

The Fusion Architecture is Apple’s first multi-die chiplet design for Pro and Max chips. It bonds two separate 3nm dies into a single package with fully shared unified memory, replacing the monolithic single-die approach used in every prior M-series Pro and Max chip.

Every M-series Pro and Max chip from M1 through M4 was a monolithic system-on-chip, one piece of silicon carrying everything. The M5 Pro and M5 Max break that pattern. Apple now bonds two separate 3nm dies (TSMC N3P) into a single package using TSMC’s SoIC-mH 2.5D packaging: solder-free hybrid bonding with direct copper-to-copper connections between dies.

This is conceptually similar to AMD’s chiplet strategy in Ryzen/EPYC, but with a critical distinction: Apple preserves the unified memory architecture across the die boundary. Both dies share a single pool of memory with zero copies, zero explicit data transfers, and zero bandwidth penalty for cross-die access. From the perspective of macOS and every application running on it, the chip should behave identically to a single-die design, though independent verification of the cross-die latency claims is still pending.

What each die contributes:

The duplication is what enables the scaling: an 18-core CPU and up to 40 GPU cores, roughly double what a single 3nm die could accommodate at acceptable manufacturing yields.

Thermal and Yield Wins

The chiplet separation should solve two constraints that limited M4 Pro/Max:

Reports suggest the M5 Pro and M5 Max may share a unified base silicon design, with the Max activating additional GPU chiplets or using a different chiplet configuration. Apple hasn’t confirmed this, but it would be consistent with the yield economics of chiplet packaging.
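The yield argument can be made concrete with the textbook Poisson yield model, Y = exp(-D·A). The sketch below is illustrative only: the defect density and die areas are hypothetical placeholders, not Apple or TSMC figures, and it assumes dies are tested before bonding so that only good dies are packaged.

```python
import math

def poisson_yield(defect_density_per_cm2: float, die_area_cm2: float) -> float:
    """Fraction of dies with zero defects under the Poisson yield model."""
    return math.exp(-defect_density_per_cm2 * die_area_cm2)

def silicon_per_good_package(defect_density: float, die_area: float, dies_per_package: int) -> float:
    """Wafer area (cm^2) consumed per working package, assuming known-good-die testing."""
    return dies_per_package * die_area / poisson_yield(defect_density, die_area)

# Hypothetical inputs, chosen only to show the shape of the trade-off.
D = 0.1           # defects per cm^2 (assumed)
TOTAL_AREA = 4.0  # cm^2 of logic needed in the package (assumed)

monolithic = silicon_per_good_package(D, TOTAL_AREA, 1)
two_dies = silicon_per_good_package(D, TOTAL_AREA / 2, 2)

print(f"monolithic: {monolithic:.2f} cm^2 of wafer per good package")
print(f"two dies:   {two_dies:.2f} cm^2 of wafer per good package")
```

Under these assumed inputs the two-die package consumes less wafer area per working unit, because a defect scraps a smaller piece of silicon. Packaging cost and bonding yield are ignored here and would narrow the gap.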

The CPU: A Three-Tier Hierarchy That Eliminates Efficiency Cores

What Changed in the M5 CPU?

The M5 introduces a three-tier CPU core hierarchy (Super cores, Performance cores, and Efficiency cores), but the M5 Pro and M5 Max eliminate Efficiency cores entirely, running 6 Super cores and 12 Performance cores for a total of 18 high-performance cores.

| Core Type | Clock Speed | Decode Width | Role |
|---|---|---|---|
| Super Core | Up to 4.61 GHz | 10-wide | Highest single-threaded performance; Apple claims “world’s fastest CPU core” |
| Performance Core | Up to 4.38 GHz | 7-wide | Power-efficient multithreaded pro workloads |
| Efficiency Core | ~3.0 GHz | N/A | Eliminated in M5 Pro/Max, exists only in base M5 |

The M5 Pro and M5 Max run 6 Super cores + 12 Performance cores with zero Efficiency cores. This is a deliberate architectural decision, not a binning artifact. For comparison, the M4 Pro had 14 cores (10P + 4E) and the M4 Max had 16 cores (12P + 4E).

Why the Performance Core Matters

The new Performance cores are not renamed Efficiency cores. They are an entirely new design:

This is fundamentally different from Efficiency cores, which were designed to barely register on power telemetry while handling background tasks. The new Performance cores give the chip a more granular thermal profile for demanding sustained workloads.

CPU Performance Benchmarks

| Metric | M5 Pro/Max | vs. M4 Pro/Max | vs. M1 Pro/Max |
|---|---|---|---|
| Single-core (Geekbench 6.5) | ~4,260 | ~10% faster | ~2× faster |
| Multi-core (Geekbench 6.5) | ~29,200 (Max) | Up to 30% faster | Up to 2.5× faster |
| Total CPU cores | 18 | +4 cores (vs M4 Pro 14) | +8 cores (vs M1 Pro 10) |

The Super core’s 10-wide instruction decode front end is the widest in any consumer CPU. It delivers roughly 10% IPC improvement over M4 at equivalent clocks. Combined with the clock speed increase to 4.61 GHz, single-threaded performance jumps ~15%, a meaningful gain in a segment where annual improvements are typically 3–5%.

The GPU: Apple’s Tensor Core Moment?

What Are M5 Neural Accelerators?

The M5 Neural Accelerators are what Apple describes as dedicated matrix-multiplication hardware units embedded in every GPU core, which would make them Apple’s first purpose-built AI silicon inside the GPU. Each Neural Accelerator is specified to perform 1,024 FP16 fused multiply-accumulate operations per cycle, and the 40-core M5 Max would deliver approximately 70 TFLOPS FP16 in aggregate if those specs hold.

Here’s the fact that reframes the entire M5 story: from M1 through M4, Apple’s GPU had no dedicated matrix-multiplication hardware. All matrix math ran through 128 regular ALUs per GPU core, using the same FP32 pipeline shared with graphics rendering. The simdgroup_matrix Metal instructions improved utilization, but the underlying hardware was generic. The M4 Max peaked at 18.4 TFLOPS FP32 through these shared ALUs.

The M5 appears to change this at the silicon level.

To put this in perspective:

| Generation | AI Compute Path | Peak Throughput | Dedicated Matrix HW? |
|---|---|---|---|
| M1–M4 Max | 128 ALUs/core, shared FP32 pipeline | 18.4 TFLOPS FP32 | No |
| M5 Max | 1,024 FP16 FMAs/core, dedicated Neural Accelerator | ~70 TFLOPS FP16 | Yes |

If these specifications translate to real-world performance, this isn’t an incremental improvement. It’s a category change, architecturally equivalent to when NVIDIA introduced Tensor Cores in the Volta generation. Apple would have dedicated matrix hardware inside the GPU for the first time. For ML engineers, this is the M5’s defining claim, and the one most in need of independent validation.
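Peak throughput is normally computed as cores × lanes per core × FLOPs per lane per cycle × clock. The GPU clock is not given here, so the sketch below back-solves for the clock that each headline figure would imply. It assumes a 40-core M4 Max and that one fused multiply-accumulate counts as two FLOPs; both are assumptions for illustration, not Apple-confirmed parameters.

```python
def implied_clock_ghz(peak_tflops: float, cores: int, lanes_per_core: int,
                      flops_per_lane: int = 2) -> float:
    """Clock (GHz) at which cores * lanes * flops_per_lane per cycle reaches peak_tflops."""
    flops_per_cycle = cores * lanes_per_core * flops_per_lane
    return peak_tflops * 1e12 / flops_per_cycle / 1e9

# M4 Max: 128 FP32 ALUs per core, each doing one FMA (2 FLOPs) per cycle.
m4_max = implied_clock_ghz(18.4, cores=40, lanes_per_core=128)

# M5 Max, reading "1,024 FMAs per core per cycle" literally (2 FLOPs each).
m5_max = implied_clock_ghz(70.0, cores=40, lanes_per_core=1024)

# Alternative reading: the 1,024 figure already counts multiply and add separately.
m5_max_alt = implied_clock_ghz(70.0, cores=40, lanes_per_core=1024, flops_per_lane=1)

print(f"M4 Max implied clock:             {m4_max:.2f} GHz")
print(f"M5 Max implied clock (FMA = 2):   {m5_max:.2f} GHz")
print(f"M5 Max implied clock (op = 1):    {m5_max_alt:.2f} GHz")
```

The literal reading implies a clock near 0.85 GHz, while the alternative reading implies about 1.7 GHz, much closer to the value implied for the M4 Max. Which counting convention Apple uses is unclear from the published figures, which is one more reason the ~70 TFLOPS number needs independent measurement.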

How Does the M5 Distribute AI Work?

The M5 is designed to run AI workloads across three heterogeneous compute blocks, each optimized for a different class of operation:

1. GPU Neural Accelerators (40 on M5 Max). Dedicated matrix-multiply hardware in every GPU core, accessed via Metal 4’s TensorOps API and Metal Performance Primitives. Purpose-built for transformer inference, the workload class that dominates modern AI. These are designed to run in parallel with the graphics pipeline, so inference shouldn’t compete with rendering.

2. Neural Engine (16-core, 3rd generation). A graph execution engine that compiles and runs entire neural network graphs atomically, architecturally closer to an FPGA than a GPU. Its native compute primitive is convolution, not matrix multiplication. This design dates back to the CNN era (A11, 2017). Transformer workloads, dominated by matmul, must be expressed as 1×1 convolutions to achieve peak ANE throughput, a workaround that Apple’s own ml-ane-transformers reference implementation uses. For a detailed technical breakdown of the ANE’s actual hardware execution model, see the reverse-engineering analysis of the M4 ANE listed under Sources.

One important caveat: the Neural Engine cannot be programmed directly. There is no public ISA, no kernel SDK. All Neural Engine workloads route through CoreML, and custom model layers fall back to the GPU. This is why MLX, llama.cpp, and Ollama all execute on the GPU rather than the Neural Engine. The GPU Neural Accelerators in M5 would effectively solve this by bringing dedicated matrix hardware into the programmable GPU pipeline.

3. CPU AMX (Super + Performance cores). Matrix co-processors on each CPU core for lower-latency tensor operations and workloads that benefit from CPU cache locality.

CoreML acts as the orchestrator, routing operations to whichever compute unit handles them best. This three-pronged architecture is why Apple can claim “4× AI compute”: it’s the aggregate of all three pathways, not a single unit doing 4× the work.

How Does M5 Max Compare to NVIDIA in Raw Compute?

Let’s be direct about where Apple would stand against NVIDIA if the specs hold:

| Platform | Peak AI Compute | Memory | Bandwidth | Notes / Price |
|---|---|---|---|---|
| M4 Max | 18.4 TFLOPS FP32 (shared ALUs) | 128 GB unified | 546 GB/s | No dedicated matrix HW |
| M5 Max | ~70 TFLOPS FP16 | 128 GB unified | 614 GB/s | Dedicated Neural Accelerators |
| RTX PRO 6000 | ~500 TFLOPS FP16 (tensor cores) | 96 GB GDDR7 ECC | 1,792 GB/s | Enterprise, ~$10,000 |
| RTX 5090 | ~380 TFLOPS FP16 (tensor cores) | 32 GB GDDR7 | 1,792 GB/s | Desktop, ~$2,000 |
| H100 SXM | 1,979 TFLOPS FP16 (tensor cores) | 80 GB HBM3 | 3,350 GB/s | Datacenter, ~$30K+ |
| DGX Spark | 1 petaFLOP FP4 | 128 GB unified | 273 GB/s | Desktop, $4,699 |

Apple would still lose on raw TFLOPS. Badly. A $2,000 RTX 5090 delivers over 5× the FP16 throughput, and a $10,000 RTX PRO 6000 delivers roughly 7×. An H100 delivers 28×.
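The multipliers quoted above follow directly from the FP16 column of the table. A quick check, using only the table's own approximate figures:

```python
M5_MAX_FP16_TFLOPS = 70.0  # approximate figure from the table above

nvidia_fp16_tflops = {
    "RTX 5090": 380.0,
    "RTX PRO 6000": 500.0,
    "H100 SXM": 1979.0,
}

# Ratio of each NVIDIA part's quoted FP16 throughput to the M5 Max figure.
ratios = {name: tflops / M5_MAX_FP16_TFLOPS for name, tflops in nvidia_fp16_tflops.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x the M5 Max")
```

These are ratios of vendor peak numbers, so they say nothing about achieved throughput on a given model.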

But raw compute isn’t the whole story. And that’s where Apple’s architectural bet gets interesting.

The VRAM Cliff: Could 70B Models Run Faster on Apple?

What Is the VRAM Cliff?

The VRAM cliff is the performance collapse that occurs when an AI model exceeds NVIDIA GPU memory capacity. Overflow data offloads to system RAM over PCIe at ~32 GB/s, roughly a 30× bandwidth drop from GPU memory speeds, cratering inference performance. Apple’s unified memory architecture is designed to eliminate this cliff entirely.

This is the single most important theoretical argument for Apple Silicon in large-model inference, and most coverage undersells it dramatically.

A 70-billion-parameter model quantized to 4-bit precision requires approximately 42 GB of memory. The RTX 4090 has 24 GB of VRAM. The RTX 5090 has 32 GB. Neither fits the model.
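The ~42 GB figure can be reproduced with simple arithmetic. Pure 4-bit storage of 70 billion weights would be 35 GB; the sketch below assumes an effective rate of about 4.8 bits per weight, since 4-bit formats typically also store per-block scales and keep some tensors at higher precision. That 4.8 value is an assumption chosen to match the article's figure, not a number from Apple or NVIDIA.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9

pure_4bit_gb = weights_gb(70, 4.0)
# Assumed effective rate, including quantization scales and higher-precision tensors.
model_gb = weights_gb(70, 4.8)

memory_gb = {"RTX 4090": 24, "RTX 5090": 32, "M5 Max (unified)": 128}
fits = {name: model_gb <= capacity for name, capacity in memory_gb.items()}

print(f"pure 4-bit: {pure_4bit_gb:.0f} GB, with overhead: {model_gb:.0f} GB")
for name, ok in fits.items():
    print(f"{name}: {'fits' if ok else 'does not fit'}")
```

The check covers weights only. KV cache, activations, and (on a Mac) the operating system all need memory on top of this.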

On Apple Silicon, there should be no VRAM cliff. All 128 GB of unified memory is GPU-addressable at full bandwidth. A tensor loaded for GPU inference is immediately available to the Neural Engine or CPU without explicit memory copies: zero cudaMemcpy, zero PCIe bottleneck, zero bus contention. The entire memory pool runs through a single coherent address space via MTLStorageModeShared.

Will 70B Models Actually Run Faster on M5 Max Than RTX 4090?

Based on the architecture and M4 Max precedent, there’s strong reason to believe so. The argument comes down to memory architecture, not raw compute power:

| Model | Platform | Inference Speed | Why |
|---|---|---|---|
| 70B Q4 (~42 GB) | RTX 4090 (24 GB VRAM) | ~10 tok/s | PCIe offload kills bandwidth |
| 70B Q4 (~42 GB) | M4 Max (128 GB, 546 GB/s) | ~28 tok/s | Full model at GPU bandwidth |
| 70B Q4 (~42 GB) | M5 Max (128 GB, 614 GB/s) | ~30–35 tok/s (est.) | 12% more bandwidth + Neural Accelerators |

The M4 Max already demonstrated this pattern: a laptop chip running a 70-billion-parameter model roughly 3× faster than a $1,600 desktop GPU, because the model fits in memory and the bandwidth is there. If the M5 Max’s specifications hold, the gap should widen slightly thanks to the 12% bandwidth increase and dedicated Neural Accelerators for the prefill phase.

For models that do fit in NVIDIA VRAM (7B, 13B), NVIDIA would still win handily: 100–150 tok/s on an RTX 4090 versus an estimated 40–50 tok/s on M5 Max. The M5 Max isn’t expected to be faster than NVIDIA at everything. The bet is that it’s faster at the specific workloads that matter for large-model inference, and that’s where the industry is heading.

Zero-Copy Unified Memory in Practice

Unified memory isn’t just a capacity story. All three AI compute units (CPU, GPU Neural Accelerators, and Neural Engine) share a single physical memory pool with zero-copy data transfer via IOSurface. A tensor loaded for GPU inference is immediately available to the Neural Engine or CPU AMX without explicit memory copies. This eliminates the host-to-device transfer overhead that is a constant friction point in NVIDIA CUDA workflows.

The M5 Max also includes a 48 MB system-level cache shared across the entire SoC: CPU, GPU, and Neural Engine. This cache sits between L2 and DRAM, keeping frequently accessed tensor data close to all three compute units. NVIDIA’s architecture has no equivalent to this system-wide shared cache layer. On Apple’s side, the cache partially compensates for the bandwidth gap between LPDDR5X and NVIDIA’s HBM.

TTFT vs Token Generation: The Nuance That Matters

What Is the Difference Between TTFT and Token Generation?

LLM inference has two distinct performance phases. Prefill processes the entire input prompt and determines time-to-first-token (TTFT); it is compute-bound, so the M5’s Neural Accelerators should drive a significant speedup here. Token generation produces each subsequent token and is memory-bandwidth-bound, so improvement would be roughly proportional to the ~12% bandwidth increase from M4 Max to M5 Max.

Apple claims 4× faster LLM prompt processing on M5 versus M4. Early benchmarks from Apple’s own MLX team support this claim, but only for the prefill phase.

Apple’s MLX benchmarks using the Qwen3 model family show this split:

| Model | TTFT Speedup (M5 vs M4) | Generation Speedup |
|---|---|---|
| Qwen3-1.7B (BF16) | 3.57× | 1.27× |
| Qwen3-8B (BF16) | 3.62× | 1.24× |
| Qwen3-14B (4-bit) | 4.06× | 1.19× |
| Qwen3-30B MoE (4-bit) | 3.52× | 1.25× |

Source: Apple MLX Research — Exploring LLMs with MLX and M5 GPU Neural Accelerators

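Prefill on Qwen3-14B is 4× faster, a compute-bound speedup that appears to be driven directly by the new Neural Accelerators. Sustained token generation sees a more modest 19–27% improvement, which tracks memory bandwidth rather than compute. That is a larger step than the ~12% bandwidth increase from 546 GB/s (M4 Max) to 614 GB/s (M5 Max), so the Max-to-Max generation gain may land below these figures.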

For ML engineers, this distinction is critical. If your workflow is TTFT-sensitive (interactive chat, code completion, agentic loops), the M5’s Neural Accelerators could deliver a transformative speedup. If you’re batch-generating long sequences, the improvement would likely be real but incremental. Independent benchmarks across a wider range of models will tell the full story.
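How much the prefill speedup matters end to end depends on the ratio of prompt processing to generation. The sketch below models request latency as TTFT plus generation time and applies the Qwen3-14B speedups from the table. The baseline TTFT and generation rate are hypothetical values picked for illustration, not measurements.

```python
def request_latency_s(ttft_s: float, gen_tok_per_s: float, output_tokens: int) -> float:
    """End-to-end latency: prefill time plus time to generate the output tokens."""
    return ttft_s + output_tokens / gen_tok_per_s

# Hypothetical M4-class baseline for one long prompt (not measured values).
BASE_TTFT_S = 8.0
BASE_GEN_TOK_S = 20.0

# Speedups from the Qwen3-14B (4-bit) row of the table above.
TTFT_SPEEDUP = 4.06
GEN_SPEEDUP = 1.19

def end_to_end_speedup(output_tokens: int) -> float:
    """Ratio of baseline latency to latency with both speedups applied."""
    before = request_latency_s(BASE_TTFT_S, BASE_GEN_TOK_S, output_tokens)
    after = request_latency_s(BASE_TTFT_S / TTFT_SPEEDUP,
                              BASE_GEN_TOK_S * GEN_SPEEDUP, output_tokens)
    return before / after

for n in (50, 2000):
    print(f"{n} output tokens: {end_to_end_speedup(n):.2f}x faster end to end")
```

With these assumed baselines, a short 50-token reply comes out roughly 2.6× faster overall, while a 2,000-token generation comes out only about 1.26× faster, because generation time swamps the prefill gain.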

Apple GPU ≈ NVIDIA Architecture (More Than You Think)

How Similar Are Apple GPU and NVIDIA GPU Architectures?

Apple’s GPU compute model is architecturally near-identical to NVIDIA’s: both use 32-wide SIMD/warp execution, equivalent shared memory models (32 KB threadgroup memory), and C++14-based kernel languages. The fundamental divergence is in the memory system, not the compute model.

Here’s what this equivalence looks like in practice:

The architectural divergence isn’t in the compute model. It’s in the memory system. NVIDIA uses discrete VRAM (GDDR6X, GDDR7, or HBM) with explicit host-to-device memory management. Apple uses unified memory with a single coherent address space. The compute philosophies are nearly identical; the memory strategies are fundamentally different.

This matters for portability. Porting CUDA kernels to Metal is far less work than most assume. The execution model maps almost 1:1. The real effort is in rethinking memory management, and unified memory often simplifies that.
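As a rough porting aid, the near 1:1 mapping can be written down as a lookup table from CUDA concepts to their closest Metal Shading Language counterparts. The dictionary and the `translate` helper are illustrative constructs for this article, not part of any Apple or NVIDIA tool, and the list is far from exhaustive.

```python
# CUDA concept -> closest Metal Shading Language (MSL) counterpart
CUDA_TO_METAL = {
    "warp": "SIMD-group",
    "thread block": "threadgroup",
    "grid": "grid",
    "__global__ function": "kernel function",
    "__shared__ memory": "threadgroup address space",
    "__syncthreads()": "threadgroup_barrier(mem_flags::mem_threadgroup)",
    "__shfl_sync()": "simd_shuffle()",
    "threadIdx": "[[thread_position_in_threadgroup]]",
    "blockIdx": "[[threadgroup_position_in_grid]]",
    "cudaMemcpy": "often unnecessary with MTLStorageModeShared buffers",
}

def translate(cuda_term: str) -> str:
    """Look up the Metal counterpart, or flag the term for manual porting."""
    return CUDA_TO_METAL.get(cuda_term, "no direct counterpart listed; port by hand")

for term in ("warp", "__syncthreads()", "cudaMemcpy"):
    print(f"{term} -> {translate(term)}")
```

The last entry is the interesting one: the execution-model terms map across cleanly, while the memory-management calls are where a port changes shape.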

Dynamic Caching: A Potential Advantage NVIDIA Doesn’t Have

The M5 uses second-generation dynamic caching, a feature Apple first introduced with M3. The register file acts as a hardware-managed cache instead of requiring fixed allocation. On NVIDIA, high register usage per thread reduces occupancy (fewer threads can run concurrently), requiring manual __launch_bounds__ tuning. On Apple Silicon, the hardware adjusts dynamically: 1,024 threads per core regardless of register pressure. No occupancy tuning. No performance cliff from register-heavy kernels.
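The NVIDIA side of this trade-off can be sketched with a simplified occupancy model in which the register file is the only limit. It assumes 65,536 32-bit registers and up to 2,048 resident threads per streaming multiprocessor; the thread limit varies by architecture, and real allocation granularity is coarser than this model, so treat the output as indicative.

```python
REGISTERS_PER_SM = 65_536   # 32-bit registers per SM (assumed, typical of recent parts)
MAX_THREADS_PER_SM = 2_048  # architecture-dependent; some parts allow fewer
WARP_SIZE = 32

def resident_threads(registers_per_thread: int) -> int:
    """Threads that fit on one SM when only the register file is the limit."""
    by_registers = REGISTERS_PER_SM // registers_per_thread
    by_registers -= by_registers % WARP_SIZE  # threads are scheduled in whole warps
    return min(MAX_THREADS_PER_SM, by_registers)

for regs in (32, 64, 128, 255):
    threads = resident_threads(regs)
    share = threads / MAX_THREADS_PER_SM
    print(f"{regs:>3} regs/thread -> {threads:>4} resident threads ({share:.1%} of max)")
```

Each doubling of per-thread register use halves the number of resident threads in this model, which is the occupancy drop that `__launch_bounds__` tuning tries to manage.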

This is a genuine developer-experience advantage that rarely gets mentioned in hardware comparisons, and one that could matter more as Metal AI workloads grow in complexity.

The Mac AI Software Stack in 2026

MLX: The Framework That Matters Most

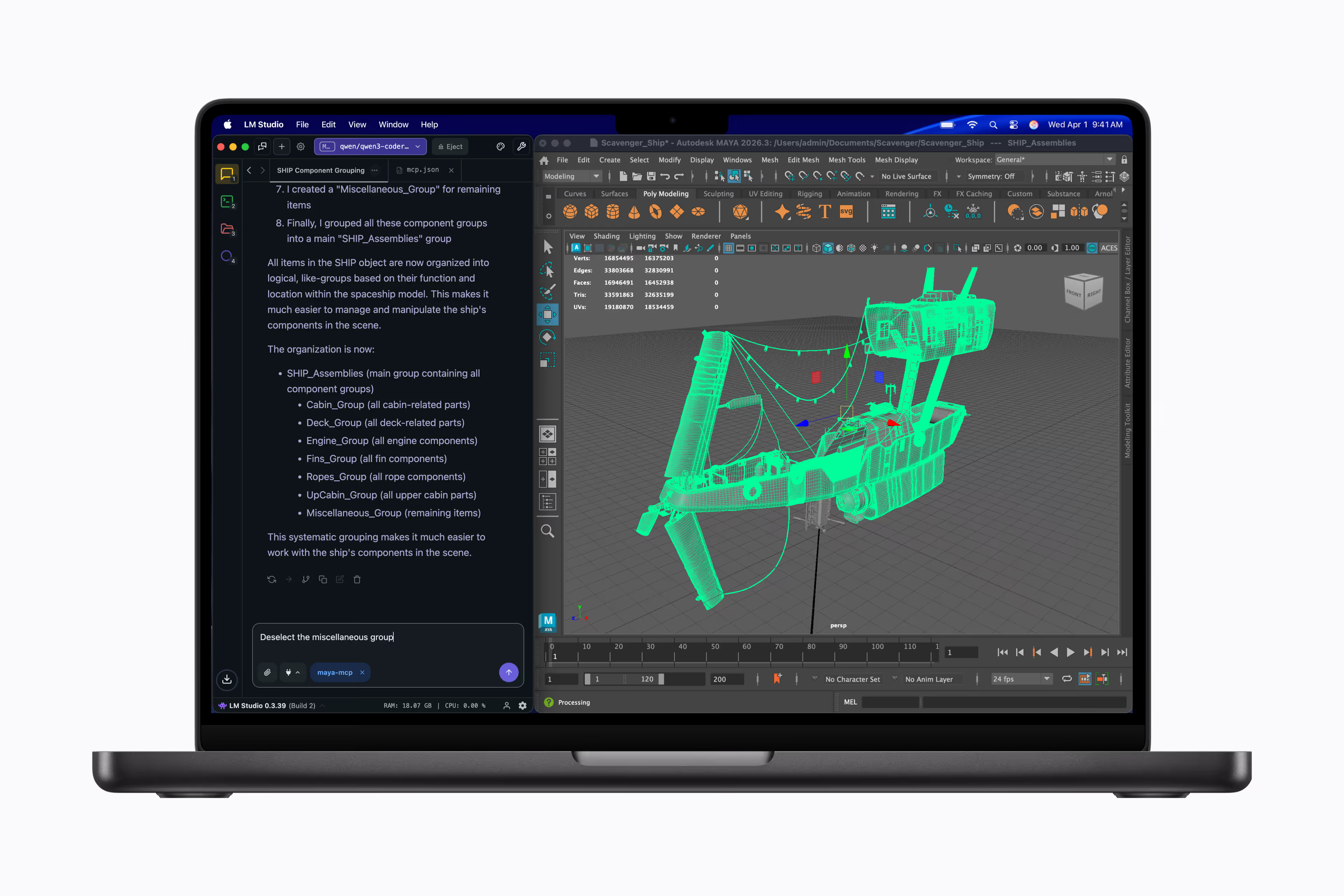

MLX is Apple’s machine learning framework, purpose-built for Apple Silicon’s unified memory architecture. It would benefit most from the M5’s Neural Accelerators because it runs directly on GPU cores where those accelerators live.

Key capabilities in 2026:

MLX is where the M5 Max should shine brightest. Apple’s own benchmarks show TTFT on Qwen3-14B at 4-bit dropping below 10 seconds, and FLUX-dev-4bit image generation running 3.8× faster on M5 versus M4. If these gains hold across third-party models and workflows, this represents a material productivity improvement for researchers and developers iterating on local models.

llama.cpp and Ollama

llama.cpp runs natively on Metal with full GPU acceleration on Apple Silicon. It’s the most widely used inference runtime for GGUF models and delivers strong token generation speeds. Ollama wraps llama.cpp with a clean API layer and one-command model management, making it the easiest on-ramp for local LLM deployment.

Both tools execute on the GPU, not the Neural Engine, because the Neural Engine cannot be programmed directly (no public ISA). The M5’s GPU Neural Accelerators should benefit these tools through Metal 4, as the dedicated matrix hardware would accelerate the matmul-heavy operations that dominate transformer inference.

vLLM with Metal Support

vLLM brings continuous batching and PagedAttention to Apple Silicon via Metal. This enables serving multiple concurrent requests efficiently, which is critical for teams running local inference servers. Apple claims batched throughput can exceed 400 tokens per second across concurrent requests on M5 Max, though single-user latency follows the bandwidth-bound generation speeds described above.
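The throughput benefit of continuous batching comes from refilling a slot as soon as a request finishes, instead of waiting for the slowest request in a batch. The toy simulation below counts decode steps under both policies. It is a scheduling sketch only: it does not model PagedAttention or vLLM's actual scheduler, and the workload is hypothetical.

```python
from collections import deque

def static_batching_steps(output_lengths: list[int], batch_size: int) -> int:
    """Each batch runs until its longest request finishes before the next batch starts."""
    steps = 0
    for i in range(0, len(output_lengths), batch_size):
        steps += max(output_lengths[i:i + batch_size])
    return steps

def continuous_batching_steps(output_lengths: list[int], batch_size: int) -> int:
    """A finished request frees its slot immediately for the next waiting request."""
    waiting = deque(output_lengths)
    active: list[int] = []
    steps = 0
    while waiting or active:
        # Fill any free slots from the queue before the next decode step.
        while waiting and len(active) < batch_size:
            active.append(waiting.popleft())
        steps += 1  # one decode step produces one token for every active request
        active = [remaining - 1 for remaining in active if remaining > 1]
    return steps

# Hypothetical workload: tokens to generate per request (each at least 1).
lengths = [8, 2, 2, 2, 8, 2, 2, 2]
print(static_batching_steps(lengths, batch_size=4))      # 16
print(continuous_batching_steps(lengths, batch_size=4))  # 10
```

Both policies generate the same 28 tokens, but the continuous scheduler needs 10 decode steps instead of 16 because short requests no longer hold slots idle behind long ones.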

PyTorch MPS: Improving but Still Behind

PyTorch’s Metal Performance Shaders (MPS) backend has improved substantially, but it remains slower than MLX for most inference workloads on Apple Silicon. The MPS backend doesn’t yet leverage the M5’s Neural Accelerators as effectively as MLX, and operator coverage still lags behind CUDA. For training, MPS is viable for prototyping and medium-sized models, but CUDA remains the standard for production training at scale.

The Ecosystem Gap, And Why It Matters

Apple’s software ecosystem for AI is younger, smaller, and less battle-tested than NVIDIA’s. The honest picture:

These gaps are real, and they’re why NVIDIA remains the default for production AI infrastructure. Apple is positioning itself as competitive for local inference, prototyping, and single-node development, not for training GPT-5.

What We Don’t Know Yet

Apple’s marketing team reveals specs selectively. Here’s what remains unconfirmed or untested as of March 2026, and these unknowns are significant enough that they should temper any conclusions drawn from spec-sheet analysis alone:

Unconfirmed Hardware Details

Performance Unknowns

Until independent reviewers and the ML community put M5 Max through rigorous testing, the performance picture remains incomplete. The architecture looks promising, but architecture and real-world performance don’t always align.

Design Problems That Could Hold Apple Back

Hardware Constraints

1. The Neural Engine is likely a dead end for LLMs. The ANE’s convolution-native architecture was designed for CNN-class workloads in 2017. Its graph execution model, which compiles and runs entire neural network graphs atomically with no programmable ISA, makes it fundamentally unsuited for the iterative, attention-heavy computation patterns of transformer models. Apple appears to have acknowledged this by adding dedicated matmul hardware to the GPU rather than redesigning the Neural Engine. The ANE handles Apple Intelligence features and on-device models efficiently, but it’s probably not the future of on-device LLM compute.

2. LPDDR5X bandwidth ceiling. At 614 GB/s, the M5 Max is fast, but NVIDIA’s H100 delivers 3,350 GB/s via HBM3, and even the RTX 4090 hits 1,008 GB/s. The 48 MB system-level cache partially compensates, but for bandwidth-bound token generation, Apple is structurally limited by its memory technology choice. Closing this gap significantly would likely require HBM or a bandwidth breakthrough in a future generation.

3. Single-node limitation. You cannot link two M5 Max machines for distributed training the way NVIDIA systems scale via NVLink and InfiniBand. Apple Silicon is inherently single-node. For models that exceed 128 GB, there is no Apple solution today.

Software Constraints

4. No FlashAttention on Metal. FlashAttention is the single most impactful optimization for transformer inference on NVIDIA hardware. It reduces memory usage from O(n²) to O(n) for attention computation. No equivalent exists on Metal. This limits Apple’s competitiveness for long-context inference workloads where attention memory dominates.

5. CUDA ecosystem inertia. Fifteen years of CUDA optimization, specialized libraries (cuDNN, cuBLAS, TensorRT, NCCL, Triton), and institutional knowledge create massive switching costs. Even if Apple’s hardware were compute-equivalent, the software ecosystem gap would take years to close.

6. CoreML is a black box. Developers cannot see or control how CoreML routes operations between the Neural Engine, GPU, and CPU. Optimization requires trial-and-error rather than systematic profiling. NVIDIA’s toolchain (Nsight, nvprof, TensorRT) provides full visibility into the execution pipeline.
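The O(n²) versus O(n) point in the FlashAttention item can be made concrete by sizing what each approach has to keep in memory per attention head. The sketch compares a fully materialized FP16 score matrix with the per-row running maximum and running sum that a tiled, FlashAttention-style kernel tracks. It is memory arithmetic only, not an attention implementation, and it ignores the fixed-size tiles held in on-chip memory.

```python
def score_matrix_bytes(seq_len: int, bytes_per_element: int = 2) -> int:
    """Memory for one head's full seq_len x seq_len attention score matrix."""
    return seq_len * seq_len * bytes_per_element

def running_stats_bytes(seq_len: int, bytes_per_element: int = 4) -> int:
    """Memory for the per-row running max and running sum kept by a tiled kernel."""
    return 2 * seq_len * bytes_per_element

for n in (8_192, 131_072):
    full_mib = score_matrix_bytes(n) / 2**20
    stats_mib = running_stats_bytes(n) / 2**20
    print(f"n={n:>7}: full scores {full_mib:>7.0f} MiB/head, running stats {stats_mib:.4f} MiB/head")
```

Growing the context 16× multiplies the materialized matrix by 256× (128 MiB to 32 GiB per head here) but the running statistics by only 16×, which is why this optimization matters most for long-context workloads.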

The NVIDIA DGX Spark: Apple’s New Direct Competitor

The DGX Spark landed in early 2026 and now lists at $4,699, up from $3,999 (NVIDIA raised the price in February 2026 due to memory supply constraints). It’s NVIDIA’s answer to the “run AI on your desk” market that Apple Silicon pioneered.

| Spec | M5 Max (MacBook Pro) | DGX Spark |
|---|---|---|
| AI Compute | ~70 TFLOPS FP16 | 1 petaFLOP FP4 |
| Memory | 128 GB unified | 128 GB unified |
| Bandwidth | 614 GB/s | 273 GB/s |
| CPU | 18-core (6S + 12P) | 20-core ARM (Grace) |
| Form Factor | Laptop | Desktop mini-PC |
| Power Draw | ~50W | ~300W+ |
| Price | $5,549 (128 GB / 2 TB config) | $4,699 |
| Max Model Size | ~70B Q4 | Up to 200B (claimed) |
| Software Stack | MLX, llama.cpp, Metal | CUDA, TensorRT-LLM |

The bandwidth comparison is striking: Apple could deliver 2.25× the memory bandwidth of the DGX Spark. For bandwidth-bound token generation, the bottleneck for every local LLM, this would be a significant advantage if the specs hold. The Spark counters with dramatically higher raw AI compute (1 petaFLOP FP4) and the full CUDA/TensorRT ecosystem.

How these two platforms actually compare in real-world LLM workloads is one of the most interesting unanswered questions in edge AI hardware right now. We intend to find out.

For a deeper comparison of rental options for both platforms, see our AI/ML development solutions or contact our team for configuration guidance.

What This Could Mean for Pros: Potential Workflow Impact

AI/ML Researchers and Engineers

If the M5 Max delivers on its specs, it would be a legitimate local AI development workstation: running 70B models in unified memory with 4× faster prefill, and iterating on model architectures locally before deploying to cloud GPU clusters. The 128 GB memory ceiling and strong bandwidth would make it potentially the most capable single-device platform for large-model experimentation outside of dedicated server hardware.

Typical use cases: local inference, model prototyping, fine-tuning experiments on models up to ~70B, RAG pipelines, and agentic frameworks.

VFX Artists and Video Engineers

The 40-core GPU reportedly performs on par with the NVIDIA RTX 5070 in synthetic benchmarks, in a laptop. Third-generation ray tracing is claimed to run up to 35% faster on M5 Pro than on M4 Pro, and up to 30% faster on M5 Max than on M4 Max. Apple reports DaVinci Resolve effects rendering is 3× faster on M5 Max versus M4 Max, and Topaz Video AI runs 3.5× faster.

The dedicated per-port Thunderbolt 5 controllers deserve attention here. Previous generations shared a single controller across ports, creating bandwidth contention when running multiple high-bandwidth peripherals. The M5 Pro/Max gives each of its three TB5 ports its own dedicated controller, so all three can run at full bandwidth simultaneously. For VFX artists daisy-chaining storage arrays, displays, and capture devices, this would eliminate a real bottleneck.

Internal SSD bandwidth doubles to 14.5 GB/s via a PCIe 5.0 NVMe controller, relevant for loading large models, ingesting 8K footage, and reading massive VFX scene files.

IT Procurement and Enterprise

The M5 Pro/Max introduces Memory Integrity Enforcement (MIE), described as an industry-first, always-on hardware memory safety feature that prevents buffer overflows and use-after-free exploits at zero performance cost. For enterprise security posture, this could be a meaningful differentiator.

The M5 Pro now supports up to 64 GB of unified memory (up from 48 GB on M4 Pro), making it viable for workloads that previously required the Max configuration. Wi-Fi 7 and Bluetooth 6 connectivity arrive via Apple’s N1 networking chip.

If you’re evaluating how renting hardware works for a team deployment, the M5 Pro’s expanded memory makes it a strong middle-ground option between base configurations and the full M5 Max.

Our Take: Apple’s Best Shot Yet, But the Jury Is Still Out

Here’s the bottom line: based on its architecture and specifications, the M5 Max looks like it could be the best single-device platform for running 70B+ parameter models locally, promising ~70 TFLOPS FP16 and 128 GB unified memory with no VRAM cliff. But “could be” and “is” are separated by independent benchmarks that haven’t landed yet. For small-model inference speed and training at scale, NVIDIA remains superior with 5–28× more raw compute and the mature CUDA ecosystem.

Where Apple could win decisively:

Where NVIDIA still leads, and likely will for some time:

The honest conclusion: Apple appears to be making its most serious architectural play yet at NVIDIA’s position in edge AI and local inference. It’s not replacing CUDA for datacenter training. But for the growing class of workloads where you need to run a 70B+ model on a single device (local development, privacy-sensitive deployment, energy-constrained environments), the M5 Max could be the best hardware available at any price. We’ll know for certain once independent testing catches up to the spec sheet.

We’re Excited to Put This to the Test

At Skorppio, we’re preparing to receive M5 Max MacBook Pro configurations into our rental inventory as soon as they’re available. We’ll be running our own benchmarks across AI/ML inference, VFX rendering, and video production workloads, and publishing the results. This is the hardware generation we’ve been most anticipating, and we want to give our customers real data, not spec-sheet extrapolation.

If you want to be among the first to test this hardware on your actual workloads, Skorppio will offer M5 Max workstation rentals alongside our existing NVIDIA RTX PRO 6000 workstations. Rent the configuration, test on your workload, then decide without the upfront capital commitment. You can use our VRAM calculator to estimate whether your model fits in the M5 Max’s 128 GB unified memory.

The AI hardware landscape just got a lot more interesting. We’ll be watching Metal 4 TensorOps adoption, third-party Neural Accelerator benchmarks, and thermal characterization as independent reviews land. Expect updates.

Frequently Asked Questions

Can the M5 Max run 70B parameter models locally?

Based on the specifications, yes. A 70-billion-parameter model quantized to 4-bit precision requires approximately 42 GB of memory. The M5 Max provides 128 GB of unified memory at 614 GB/s, all GPU-addressable, with room remaining for context windows, KV cache, and the operating system. Using MLX or llama.cpp, the M5 Max should be capable of running 70B Q4 models at an estimated 30–35 tokens per second, based on M4 Max precedent and the bandwidth increase. Independent benchmarks at this model size are still pending.
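How much of the remaining memory the KV cache takes depends on context length. The sketch below uses the standard KV cache size formula with an assumed Llama-3-70B-style shape (80 layers, 8 KV heads with grouped-query attention, head dimension 128) and an FP16 cache. Those shape parameters are assumptions for illustration; other 70B models will differ.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_element: int = 2) -> int:
    """Keys and values for every layer, KV head, and cached token."""
    return 2 * layers * kv_heads * head_dim * context_tokens * bytes_per_element

# Assumed Llama-3-70B-style shape with grouped-query attention and an FP16 cache.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

UNIFIED_GB = 128
WEIGHTS_GB = 42  # 70B Q4 figure from the answer above

for context in (8_192, 131_072):
    cache_gb = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, context) / 1e9
    left_gb = UNIFIED_GB - WEIGHTS_GB - cache_gb
    print(f"{context:>7} tokens: KV cache {cache_gb:.1f} GB, {left_gb:.1f} GB left")
```

Under these assumptions an 8K context costs under 3 GB, while a 128K context costs about as much as the weights themselves. The "left" figure still has to cover activations, the operating system, and other applications.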

How does M5 Max AI performance compare to NVIDIA RTX 4090?

It depends on model size. For small models (7B, 13B) that fit within the RTX 4090’s 24 GB VRAM, NVIDIA is expected to remain 2–3× faster (100–150 tok/s vs est. 40–50 tok/s). For large models (70B+) that exceed NVIDIA VRAM, the M5 Max should be approximately 3× faster (~30 tok/s vs ~10 tok/s) because the entire model runs at GPU bandwidth instead of offloading to PCIe. The M4 Max already demonstrated this pattern, and the M5 Max’s additional bandwidth and Neural Accelerators should widen the gap.

What are Neural Accelerators in the M5 GPU?

Neural Accelerators are what Apple describes as dedicated matrix-multiplication hardware units embedded in every M5 GPU core. Each one is specified to perform 1,024 FP16 fused multiply-accumulate operations per cycle. The 40-core M5 Max would deliver approximately 70 TFLOPS FP16 in aggregate, up from 18.4 TFLOPS FP32 on the M4 Max, which had no dedicated matrix hardware. They are accessed through Metal 4’s TensorOps API.

Is the M5 Max good for AI training?

The M5 Max should be suitable for prototyping, fine-tuning, and training small-to-medium models locally. PyTorch MPS and MLX both support training workflows. However, for large-scale production training, NVIDIA remains the industry standard. CUDA, NVLink for multi-GPU scaling, FlashAttention, and 15 years of optimized libraries provide capabilities Apple cannot yet match. The M5 Max is positioned for inference, not training at scale. For teams running LoRA or QLoRA fine-tuning workflows on NVIDIA hardware, the AI Fine-Tuning Kit provides a ready-to-deploy setup with 256 GB addressable memory.

Should I choose M5 Max or DGX Spark for local AI development?

The M5 Max offers 2.25× the memory bandwidth (614 GB/s vs 273 GB/s), which directly benefits the bandwidth-bound token generation phase of LLM inference. It also runs at ~50W in a laptop form factor. The DGX Spark counters with dramatically higher raw AI compute (1 petaFLOP FP4), the full CUDA/TensorRT ecosystem, and support for models up to 200B parameters. Choose M5 Max for portability, energy efficiency, and bandwidth-sensitive inference. Choose DGX Spark for maximum compute, CUDA ecosystem access, and larger model support. Real-world head-to-head comparisons on identical workloads haven’t been published yet. We plan to run those tests ourselves.

Have questions about M5 Max configurations for your AI or VFX workflow? Reach out to our team. We help engineers and creative professionals match hardware to workload, not the other way around.

Sources and Further Reading

- Apple Newsroom: M5 Pro and M5 Max Announcement

- Apple MLX Research: Exploring LLMs with MLX and M5 GPU Neural Accelerators

- Notebookcheck: M5 Max GPU Analysis — On Par with RTX 5070

- Inside the M4 Apple Neural Engine — Maderix (ANE Reverse Engineering)

- OWC: Inside the M5 Pro and M5 Max Fusion Architecture

- MacBook Pro Technical Specifications — Apple

- MLX GitHub Repository

- NVIDIA DGX Spark
