High-Performance Computer Rentals

Dedicated bare-metal systems configured for your workflow. Flat weekly or monthly rates. No cloud. No shared tenancy. No metering. Full root access from day one.

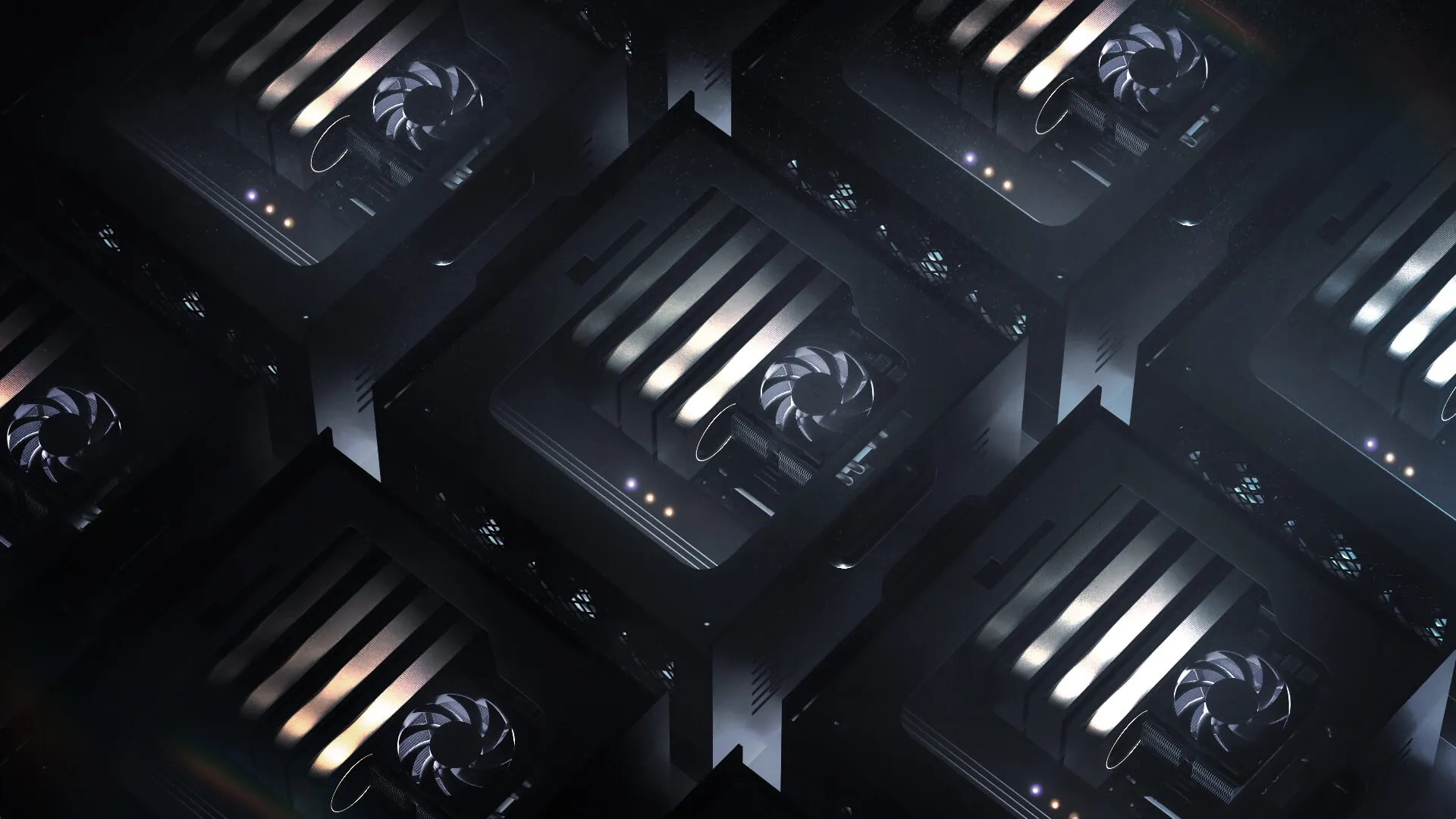

ACCESS TO ENTERPRISE HARDWARE

Skorppio is built on NVIDIA Blackwell GPUs, AMD CPUs, and enterprise memory and storage.

Hardware

Featured System

WORKSTATION

ULTRA

Purpose-built for AI fine-tuning and rendering workflows. The Renderboxes ATOM delivers enterprise-class compute in a workstation form factor, ready to deploy.

Enterprise Workflows

Deploy pre-configured systems that slot directly into your existing pipeline — no setup overhead, no compatibility surprises.

Skip six-figure purchases and 12-week lead times. Skorppio delivers pre-configured, enterprise-grade systems on flexible rental terms — shipped to your site or available in our hosted facilities.

Scale up for a project, scale down when it ends. No depreciation and no maintenance burden, just fast, secure bare-metal compute.

NEED A CUSTOM CONFIGURATION?

Tell us your platform of interest, from laptop to AI server, and one of our expert hardware architects will contact you.

A solutions architect will contact you via the email provided.

SOLUTIONS BY WORKFLOW

Your hardware should exceed the demands of your workflow. A mismatched compute architecture leads to poor performance and bottlenecks. Learn more about why Skorppio only offers workflow-proven compute across industries that demand performance.

AI & MACHINE LEARNING

Multi-GPU servers with up to 768 GB VRAM for LLM fine-tuning, inference at scale, and retrieval-augmented generation. ECC memory and fast NVMe storage, all running on 120 V power for use outside the data center.

VFX & VIRTUAL PRODUCTION

RTX PRO Blackwell workstations and render nodes configured for Redshift, V-Ray, Nuke, Houdini, and DaVinci Resolve. Production-verified from 4K through 16K resolution.

ARCHITECTURAL VISUALIZATION

RTX PRO Blackwell workstations for real-time ray-traced visualization in Enscape, Twinmotion, V-Ray, and Lumion. Handle large-scale BIM models and photorealistic client presentations at 4K and above.

SCIENTIFIC RESEARCH

On-premise compute for labs and research institutions running CUDA-accelerated workloads — molecular dynamics, climate modeling, computational fluid dynamics, and genomics pipelines.

LIVE EVENTS

Portable and rack-mounted GPU systems for Notch, Disguise, TouchDesigner, and Unreal Engine in live production environments. Configured for real-time graphics and media playback at broadcast quality.

Questions? Answers.

Frequently Asked Questions

What does Skorppio rent?

NVIDIA RTX PRO Blackwell GPU workstations, EPYC rackmount servers, multi-node clusters, and high-performance laptops. Workstations support up to 4 GPUs with 384 GB aggregate VRAM. Servers scale to 8 GPUs with 768 GB. Every system is physical hardware shipped to your location, not a cloud instance.

How fast do systems ship?

48 hours for standard configurations. Some ship next-day. Create an account to check real-time availability for specific hardware.

What are the rental terms?

Weekly minimum, no long-term contracts. Monthly terms available at lower rates. Pricing varies by configuration and is visible after account creation.

How is this different from cloud GPU providers?

Cloud providers sell virtualized, per-hour access to shared hardware in someone else's data center. Skorppio ships dedicated bare metal to your location, with flat weekly or monthly pricing, full root access, and no shared tenancy. Your data never leaves your network.

What do teams use Skorppio for?

AI/ML teams run fine-tuning, inference, and RAG pipelines. VFX studios rent for rendering, compositing, and simulation. Engineering teams use our systems for CAD, computational workloads, and prototyping. If the job needs dedicated GPU compute without cloud constraints or purchase lead times, it fits.

The M5 Max promises ~70 TFLOPS FP16 through dedicated Neural Accelerators and 128 GB unified memory at 614 GB/s. We analyze the architecture, benchmark Apple's claims, and compare head-to-head with NVIDIA for AI inference.


Hundreds of RTX 5090 vs RTX PRO 6000 comparisons already exist, yet most rely on short benchmarks that ignore real production behavior. This article explains why sustained workloads, memory integrity, and long-term stability matter more than peak scores.