ECC vs Non-ECC Memory and Silent Render Failures

ECC vs non-ECC memory is not a benchmark debate. It is a correctness-over-time problem that only becomes visible once rendering runs at scale.

Most studios never see this problem. They feel it. Renders corrupt 40 hours in. Simulations produce different results on identical hardware. A training checkpoint loads with unexpected accuracy drift, and nobody can explain why. The culprit is often invisible: a single bit has flipped in system memory, and the hardware had no mechanism to detect or correct it.

This is the ECC vs non-ECC memory problem. It determines whether your system silently accumulates errors or catches them before they reach your work.

How Memory Errors Actually Happen

A bit flip occurs when a single binary digit in a memory cell changes state without being written, from a 0 to a 1 or vice versa. At the hardware level, DRAM stores each bit as an electrical charge in a microscopic capacitor. These charges are not perfectly stable. They leak over time, and external interference can corrupt them.
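To make the effect concrete, here is a minimal Python sketch that inverts one bit of a 32-bit floating-point value. The helper name flip_bit_float32 is hypothetical, and the flip is simulated in software. It shows only how much a single bit can matter, not how DRAM behaves physically.

```python
import struct


def flip_bit_float32(value: float, bit: int) -> float:
    """Return value (stored as IEEE 754 float32) with one bit inverted.

    Bit 0 is the least significant mantissa bit, bit 31 is the sign bit.
    """
    if not 0 <= bit < 32:
        raise ValueError("bit must be in the range 0..31")
    # Reinterpret the float's 4 bytes as an unsigned 32-bit integer.
    (raw,) = struct.unpack("<I", struct.pack("<f", value))
    # Invert the chosen bit and reinterpret the bytes as a float again.
    (flipped,) = struct.unpack("<f", struct.pack("<I", raw ^ (1 << bit)))
    return flipped


# Same starting value, four different single-bit flips.
for bit in (0, 23, 30, 31):
    print(f"bit {bit:2d}: 1.0 -> {flip_bit_float32(1.0, bit)!r}")
```

A flip in the lowest mantissa bit changes the value by about one part in ten million. A flip in the exponent or sign bit halves the value, negates it, or turns it into infinity. Which bit is hit determines whether the error is invisible or catastrophic.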

What causes bit flips

Cosmic radiation and background particles. High-energy particles from cosmic rays or natural radioactive decay can strike a memory cell and change its charge state. This is not theoretical. A commonly cited rule of thumb, drawn from research at scale including Google's fleet-wide study of their datacenter memory, is approximately one correctable single-bit error per gigabyte of RAM per year, although measured rates vary widely from module to module. At that rate, a workstation with 256 GB of memory running 24/7 would see roughly one such error every 1.4 days on average.
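The arithmetic behind that figure can be sketched in a few lines of Python. The rate of one error per gigabyte per year comes from the text above. The function names are hypothetical, and the probability estimate assumes errors arrive independently at a constant rate (a Poisson model). That is a simplification, since field data tends to show errors clustering on particular modules.

```python
import math

HOURS_PER_YEAR = 365 * 24


def mean_days_between_errors(capacity_gb: float,
                             errors_per_gb_year: float = 1.0) -> float:
    """Average number of days between errors for a given memory capacity."""
    errors_per_year = capacity_gb * errors_per_gb_year
    return 365.0 / errors_per_year


def prob_at_least_one_error(capacity_gb: float, hours: float,
                            errors_per_gb_year: float = 1.0) -> float:
    """Chance of one or more errors during a run, under the Poisson assumption."""
    expected_errors = capacity_gb * errors_per_gb_year * hours / HOURS_PER_YEAR
    return 1.0 - math.exp(-expected_errors)


# 256 GB workstation: 365 / 256 is about 1.43 days between errors.
print(f"{mean_days_between_errors(256):.2f} days between errors")
# Same machine during a 40-hour render.
print(f"{prob_at_least_one_error(256, 40):.0%} chance of at least one error")
```

Under these assumptions, a 40-hour job on a 256 GB machine is more likely than not to see at least one bit error. Whether that error lands in data that matters is a separate question.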

Electrical noise and thermal stress. High temperatures increase charge leakage rates. Voltage fluctuations during sustained load can push marginally stable cells past their threshold. Memory running near its rated limits under continuous workstation loads faces higher error probability than memory idling in a desktop.

Manufacturing variation. Not all memory cells are identical. Process variation during fabrication means some cells have thinner margins than others. These weaker cells are more susceptible to interference and more likely to flip under stress. The problem scales with density. As DRAM manufacturers pack more cells into less space, the charge stored per cell decreases, and the margin for error shrinks.

ECC vs Non-ECC: What the Hardware Does Differently

Non-ECC (Standard DRAM)

Non-ECC memory stores 64 bits of data per memory word with no additional error-detection overhead. If a bit flips, the system has no mechanism to detect it. The corrupted data is used as-is by whatever process reads it next. The application, operating system, and user have no indication that anything went wrong. This is why these errors are called "silent." They don't produce error messages, crash logs, or warnings. The data is simply wrong.

ECC (Error-Correcting Code) Memory

ECC memory adds 8 extra bits per 64-bit word, for a total of 72 bits. These additional check bits store an error-correcting code, typically a SECDED (single-error correction, double-error detection) Hamming code. The code allows the memory controller to detect and correct single-bit errors in real time, with negligible performance impact on the running workload, and to detect (but not correct) double-bit errors.

When the memory controller reads a word, it recalculates the checksum and compares it to the stored value. If a single bit has flipped, the controller identifies the exact position and corrects it before passing the data to the CPU. The application never sees the error. If two bits have flipped in the same word, the controller detects the inconsistency and signals an uncorrectable error, typically halting the process or the system to prevent data corruption.

This check runs on every memory read, continuously, for every byte of data in the system, and a correction is applied whenever the check finds a single-bit error. It is not a periodic scan. It is an inline hardware function built into the memory controller.

Real-World Impact: Where Silent Errors Hit Hardest

Long-duration rendering

A 40-hour VFX render reads and writes memory billions of times. Each read is an opportunity for a bit flip to go undetected. If a corrupted value lands in a pixel color, the result is a subtle artifact that may not be visible until final review, if it is caught at all. If it lands in a geometry transform or shader parameter, the corruption can cascade through dependent calculations.

Studios running long renders on non-ECC memory are statistically likely to encounter this over time. The question is not whether it happens, but whether it is detected.

AI training and fine-tuning

Neural network training accumulates weight updates over millions of gradient steps. A single corrupted gradient value, caused by a bit flip in the memory holding that tensor, produces a slightly wrong weight update. One corrupted update is usually negligible. But each step depends on the results of the previous one, so a wrong update is carried forward rather than discarded. Over a multi-day training run, even rare memory errors can compound into measurable accuracy degradation.

This is particularly insidious because the training loss may still converge. The model appears to train successfully, but its final accuracy is lower than expected, and the cause is virtually impossible to diagnose after the fact without ECC logging.

Scientific computing and simulation

Finite element analysis, molecular dynamics, and fluid simulation all rely on iterative numerical solvers where each timestep depends on the previous result. A bit flip in a floating-point value used in an early timestep propagates through every subsequent calculation. The simulation may complete without crashing, but the results diverge from the correct solution in ways that are difficult to distinguish from legitimate numerical behavior.

Financial and compliance workloads

For any workload where data integrity has regulatory or legal implications, silent memory corruption is an unacceptable risk. ECC memory is a baseline requirement, not an option, in environments subject to audit or data-integrity standards.

Why Most "Pro" Workstations Don't Have ECC

The majority of workstations sold as "professional" use non-ECC memory. This is not a technical limitation. It is an economic one driven by platform choice.

Consumer platforms lock out ECC

Most Intel consumer configurations (Core i7 or Core i9 on Z-series motherboards) do not support ECC memory. AMD Ryzen technically supports ECC at the CPU level, but motherboard manufacturers rarely validate or guarantee ECC functionality on consumer boards. The result is that workstations built on consumer platforms, which includes the vast majority of "pro" systems sold by system integrators, either cannot run ECC memory at all or run it with no validation that correction is actually working.

Professional platforms support ECC natively

ECC support requires professional-grade platforms. AMD Threadripper Pro and EPYC support registered ECC (RDIMM) natively. Intel Xeon W and Xeon Scalable do the same. These platforms also provide the expanded PCIe lane counts, multi-channel memory controllers, and firmware stability required for sustained production workloads.

The premium for ECC memory modules over equivalent non-ECC modules is typically 10-20%. The premium for professional platforms (Threadripper Pro vs Ryzen, Xeon vs Core) is more significant, driven by the motherboard, chipset, and validation requirements. But for workloads where data integrity matters, the cost of a single undetected error, in wasted render time, corrupted training runs, or flawed simulation results, typically exceeds the platform cost difference many times over.

GPU Memory: VRAM ECC and Why It Matters

The ECC discussion extends beyond system RAM. GPU VRAM is equally susceptible to bit flips, and for GPU-accelerated workloads, VRAM errors can be even more impactful because GPUs process data at higher throughput rates.

Consumer GPUs (GeForce RTX series)

The RTX 5090 and other GeForce cards do not enable ECC on their VRAM by default. Some NVIDIA consumer GPUs have the hardware capability for a limited form of ECC but do not activate it in consumer drivers. When VRAM ECC is not active, bit flips in GPU memory during rendering, training, or inference go undetected.

Professional GPUs (RTX PRO / Quadro / Datacenter)

The RTX PRO 6000, A6000, and datacenter GPUs (A100, H100, H200) support full hardware ECC on VRAM. Datacenter parts ship with it enabled by default; on workstation cards the default can vary by model and driver, so it is worth confirming with nvidia-smi. When enabled, ECC provides continuous error detection and correction on all GPU memory operations. For a detailed comparison of these GPU architectures, see our RTX 5090 vs RTX PRO 6000 Blackwell analysis.

Why GPU ECC matters for production

A GPU processing a Redshift render reads and writes VRAM billions of times per frame. A GPU running transformer inference processes every tensor through VRAM on every forward pass. In both cases, undetected VRAM bit flips produce the same class of silent corruption as system RAM errors: wrong pixels, wrong gradients, wrong results, with no indication that anything failed.

How to Tell If Your Current System Has ECC

System RAM

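Windows: Task Manager → Performance → Memory shows the form factor and speed but gives no clear ECC indication, so run wmic memorychip get DataWidth, TotalWidth in Command Prompt. On recent Windows releases where wmic is deprecated, the PowerShell equivalent is Get-CimInstance Win32_PhysicalMemory | Select-Object DataWidth, TotalWidth. If DataWidth equals TotalWidth (e.g., both 64), the modules are non-ECC. If TotalWidth is larger than DataWidth (e.g., 72 versus 64), the modules carry ECC check bits. Whether correction is actually active also depends on the CPU and motherboard supporting it.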
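The width comparison can be automated with a short Python sketch that interprets the command's text output. The function name classify_modules is hypothetical, and the sample text is an assumed illustration of the two-column output rather than a capture from a real machine. Any TotalWidth larger than DataWidth is treated as a sign of check bits.

```python
def classify_modules(wmic_output: str) -> list[str]:
    """Label each memory module as 'ECC' or 'non-ECC' from width columns."""
    rows = [line.split() for line in wmic_output.splitlines() if line.strip()]
    if not rows:
        return []
    header, modules = rows[0], rows[1:]
    data_col = header.index("DataWidth")
    total_col = header.index("TotalWidth")
    labels = []
    for module in modules:
        data_width = int(module[data_col])
        total_width = int(module[total_col])
        # Extra bits beyond the data width are the ECC check bits.
        labels.append("ECC" if total_width > data_width else "non-ECC")
    return labels


# Assumed sample output for a system with two ECC modules.
sample = """DataWidth  TotalWidth
64         72
64         72
"""
print(classify_modules(sample))
```

This reports what the modules are, not whether the platform is using the check bits, so it complements rather than replaces a check of the platform's ECC support.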

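Linux: Run sudo dmidecode -t memory (root access is required) and check the "Error Correction Type" field. On a properly configured ECC system it typically reads "Multi-bit ECC" or "Single-bit ECC". A value of "None" means the firmware reports no ECC.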
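For fleets of Linux machines, the same field can be extracted with a small Python sketch. The function names are hypothetical, and the sample text is an assumed excerpt in the usual dmidecode layout, not output from a specific system.

```python
import re


def error_correction_types(dmidecode_output: str) -> list[str]:
    """Return every 'Error Correction Type' value found in the text."""
    pattern = r"^[ \t]*Error Correction Type:[ \t]*(.+)$"
    return [value.strip()
            for value in re.findall(pattern, dmidecode_output, flags=re.MULTILINE)]


def reports_ecc(dmidecode_output: str) -> bool:
    """True if any memory array reports a single-bit or multi-bit ECC type."""
    return any("ECC" in value for value in error_correction_types(dmidecode_output))


# Assumed sample excerpt from `dmidecode -t memory`.
sample = """Physical Memory Array
\tLocation: System Board Or Motherboard
\tUse: System Memory
\tError Correction Type: Multi-bit ECC
\tMaximum Capacity: 2 TB
"""
print(error_correction_types(sample), reports_ecc(sample))
```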

GPU VRAM

Run nvidia-smi -q -d ECC to check ECC status on NVIDIA GPUs. If the "ECC Mode" section shows "Current: Enabled" and the counters under "ECC Errors" are all 0, VRAM ECC is active and no errors have been recorded since the counters were last reset. If ECC Mode shows "Disabled" or "N/A", VRAM ECC is not enabled or not available on that GPU.
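A scripted version of this check can use nvidia-smi's CSV query mode, sketched below in Python. The query field ecc.mode.current is part of nvidia-smi's --query-gpu interface. The function names and the GPU names in the sample text are illustrative assumptions, and anything other than "Enabled" is treated as ECC not being active.

```python
import csv
import io
import shutil
import subprocess

QUERY = ["nvidia-smi", "--query-gpu=name,ecc.mode.current",
         "--format=csv,noheader"]


def parse_ecc_csv(text: str) -> list[tuple[str, bool]]:
    """Turn 'name, mode' CSV lines into (gpu_name, ecc_enabled) pairs."""
    results = []
    for row in csv.reader(io.StringIO(text), skipinitialspace=True):
        if len(row) < 2:
            continue  # skip blank or malformed lines
        name, mode = row[0].strip(), row[1].strip()
        results.append((name, mode == "Enabled"))
    return results


def query_gpu_ecc() -> list[tuple[str, bool]]:
    """Run nvidia-smi if it is installed; return an empty list otherwise."""
    if shutil.which("nvidia-smi") is None:
        return []
    completed = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_ecc_csv(completed.stdout)


# Assumed sample output for one ECC-enabled GPU and one without ECC support.
sample = "NVIDIA RTX A6000, Enabled\nNVIDIA GeForce RTX 4090, [N/A]\n"
print(parse_ecc_csv(sample))
```

Keeping the parsing separate from the subprocess call means the logic can be checked on a machine without a GPU, and query_gpu_ecc can be run from a job scheduler before a long render or training run starts.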

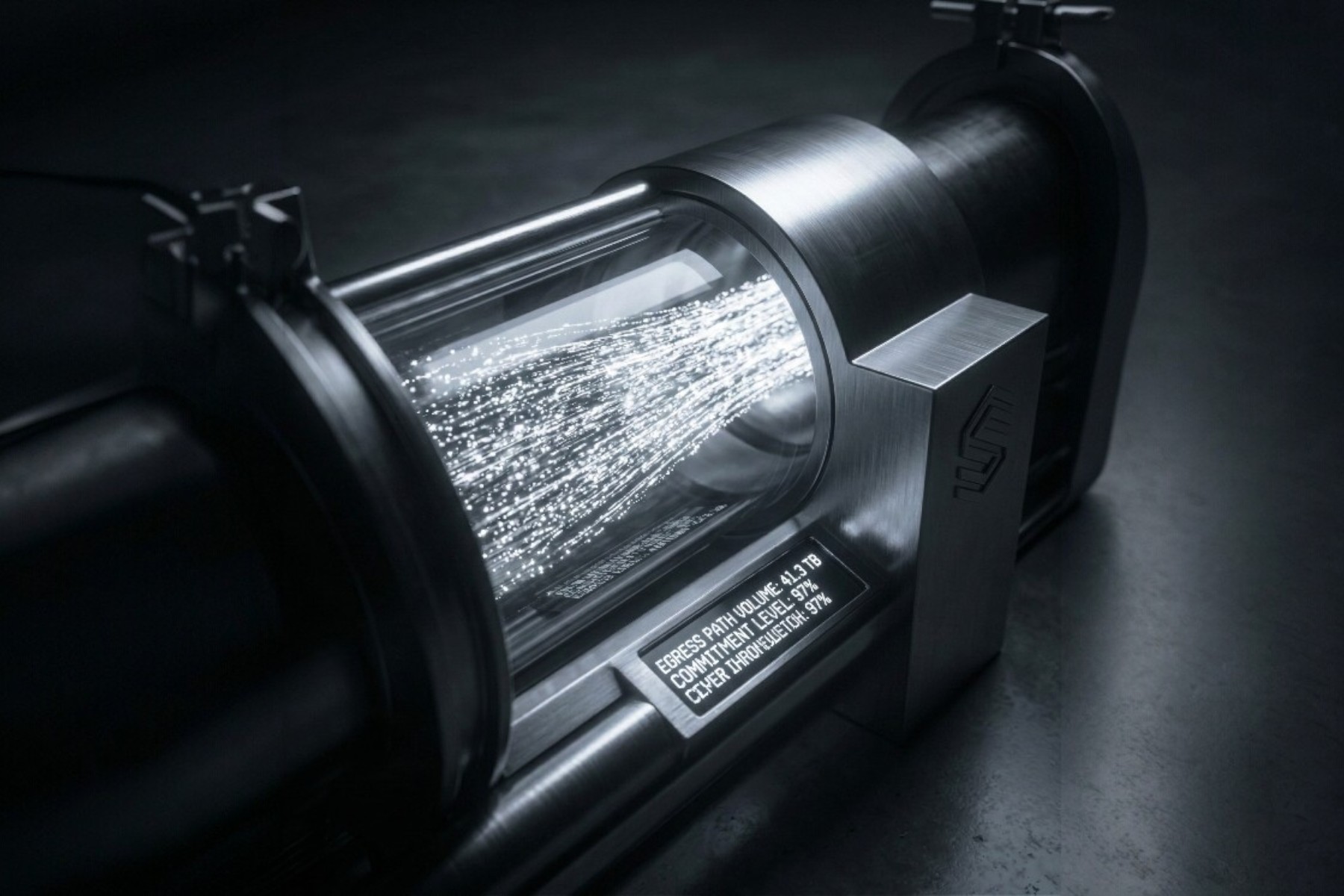

Skorppio's ECC Implementation

Every Skorppio workstation and server ships with ECC system memory as a non-negotiable baseline. VRAM ECC is included on every configuration built around professional GPUs.

System memory. All Skorppio systems use Registered ECC (RDIMM) modules on professional platforms (AMD Threadripper Pro, EPYC, or Intel Xeon). Memory is validated at the component level and stress-tested under sustained load before deployment.

GPU memory. All Skorppio multi-GPU workstations deploy NVIDIA RTX PRO 6000 GPUs with full hardware ECC enabled on VRAM. Our single-GPU desktop systems use GeForce RTX 5090 cards where the workload profile (shorter sessions, interactive use) carries lower cumulative error risk. For sustained rendering and AI workloads, we recommend configurations with ECC-enabled professional GPUs.

Why this matters for rental customers. When you rent a Skorppio workstation for a multi-day render or a training run, ECC is already configured and active. You don't need to verify it, enable it, or pay extra for it. Your Rack Pro Workstation, Ultra GPU Workstation, or Scientific Compute Kit arrives with error correction running on every byte of memory in the system.

Recommendations

When non-ECC is acceptable

Interactive work sessions under 8 hours. Tasks where output is visually reviewed and easily re-run. Development and testing environments where errors would be caught during validation. Workloads on consumer GPUs where the cost-performance tradeoff is appropriate for the risk level.

When ECC is essential

Renders exceeding 12 hours. AI training runs of any significant duration. Scientific simulations where result reproducibility matters. Any workload where a silent error would not be detected before delivery or publication. Multi-day unattended batch processing.

The boundary is not about the application. It is about the duration and the consequence of an undetected error. Short interactive sessions are forgiving. Long unattended runs are not.

For teams running sustained production workloads, renting ECC-equipped hardware addresses this risk without a platform purchase. See our rental process walkthrough for details on how we deploy production-grade systems with ECC active from day one, or explore the full product catalog to compare configurations.

Sources and Further Reading

Google Research – DRAM Errors in the Wild: A Large-Scale Field Study

IBM – ECC and Chipkill Memory Protection
