Computer Rental in Los Angeles

NVIDIA GPU Workstations and Servers. Next Business Day Delivery Across LA.

Bare-metal NVIDIA GPU hardware delivered to your LA production studio or office. Flat weekly or monthly rates. Pre-configured and deployment-ready.

Pricing you can see. Hardware you can book.

Skorppio delivers dedicated, bare-metal workstations and servers to productions across Los Angeles. No cloud. No virtualization. No shared resources. Your hardware, pre-configured and deployed on your timeline.

01 / BARE METAL

Dedicated physical hardware. No VMs, no shared GPUs, no noisy neighbors.

02 / FLEXIBLE TERMS

Weekly or monthly. Scale up, scale down, or return — no long-term lock-in.

03 / DEPLOYMENT READY

Pre-configured to your specs. Plug in and start working — no setup overhead.

04 / DIRECT SUPPORT

One point of contact. No ticket queues, no chatbots, no layers.

Same-week deployment across Greater Los Angeles

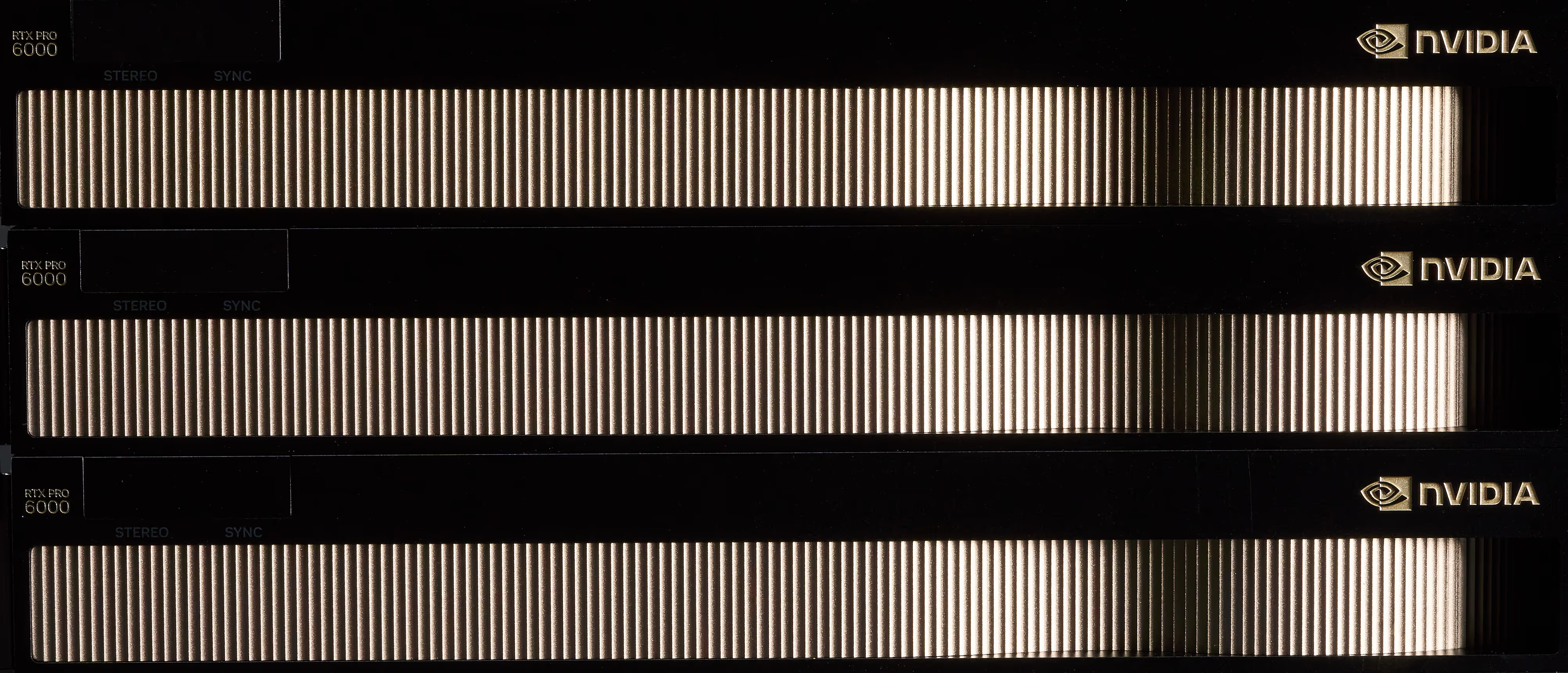

BLACKWELL GENERATION GPUs

Built to make pixels

LOS ANGELES RUNS ON COMPUTE

Why Los Angeles Teams Rent High-Performance Computers

Los Angeles runs on compute. VFX studios in Burbank pull multi-day renders. AI startups in Playa Vista train models on multi-GPU clusters. Post-production houses across Hollywood need machines that match the pace of delivery schedules, not procurement cycles. Skorppio was built by engineers with over twenty years in LA production and is headquartered on Hollywood's historic Eastman Kodak Lot.

A $150,000 GPU server depreciates the moment it ships. NVIDIA releases a new architecture roughly every one to two years, and the resale market punishes late sellers. Meanwhile, project timelines shift. A six-month VFX contract becomes nine. A proof-of-concept wraps in three weeks instead of three months. Renting decouples your compute capacity from your capital budget: you get NVIDIA GPUs such as the RTX PRO 6000, H100, and A100 on terms that match your project, not a depreciation schedule. When the project ends, the hardware goes back. No disposal. No write-downs. No idle assets.

Rental Hardware Categories

Multi-GPU Workstations

Single to quad-GPU configurations with NVIDIA RTX PRO 6000 and L40S. Built for ML training, VFX rendering, and GPU-accelerated simulation.

EXPLORE WORKSTATIONS →

Rackmount Servers

NVIDIA H100, A100, and L40S in 1U–4U form factors. Deploy on-prem compute for distributed training, inference, or render farm overflow.

EXPLORE SERVERS →

Mobile Workstations

NVIDIA RTX mobile GPUs with workstation-grade reliability. For VFX supervision on set, location shoots, and field engineering across LA stages.

EXPLORE MOBILE →

VIEW OUR FULL CATALOG

Explore More Hardware

Laptops, desktops, kits, and more: browse our full rental catalog by category.

HOW RENTING WORKS

01

Tell Us Your Workload

Describe your project — AI training, VFX rendering, simulation, post-production. We match GPU, CPU, memory, and storage to the job.

02

Get Real-Time Pricing & Inventory

Self-service quotes with flat weekly or monthly rates. See the price before you commit. No hidden fees.

03

Hardware Ships Configured

Pre-built, stress-tested, deployment-ready. OS installed, drivers loaded. Next business day delivery across Greater LA, subject to availability.

04

Plug In and Start

Direct support from quote to return. Scale up mid-project or return when done. No long-term contracts.

Ready to start?

GET A QUOTE →

Fast Local Delivery Across Los Angeles

YOUR HARDWARE

CONFIGURED.

TO YOUR LOCATION

SHIPPED.

ON YOUR TERMS

DELIVERED.

Next business day delivery across Greater Los Angeles: Hollywood, Burbank, Playa Vista, DTLA, Culver City, Santa Monica, Pasadena, and Long Beach. Direct to your studio, stage, or office.

Need delivery outside LA?

We deliver pre-configured hardware nationwide via FedEx Priority. Most orders land in 2–3 business days.

Questions? Answers.

Frequently Asked Questions

How much VRAM do I need to fine-tune a large language model?

The amount of VRAM you need depends on the model size, precision format, and fine-tuning method. For a 70B-parameter model, the FP16 weights alone take about 140 GB, and full fine-tuning adds gradients and optimizer states that multiply that figure several times over. Techniques like LoRA and QLoRA significantly reduce the footprint, sometimes to under 48 GB.
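As a rough illustration of that arithmetic, here is a minimal Python sketch. It counts model states only (weights, gradients, optimizer states) and leaves out activations and framework overhead, so real usage will be higher. The 16-bytes-per-parameter figure assumes mixed-precision training with Adam, and `trainable_fraction` is a hypothetical, illustrative value for the adapter size, not a Skorppio or library default.

```python
GB = 1e9  # decimal gigabytes, the unit GPU memory is usually advertised in


def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Memory for the model weights alone at a given precision."""
    return params_billion * 1e9 * bytes_per_param / GB


def full_finetune_gb(params_billion: float) -> float:
    """Model states for mixed-precision full fine-tuning with Adam.

    Assumes 16 bytes per parameter: 2 (FP16 weights) + 2 (FP16 gradients)
    + 12 (FP32 master weights, momentum, and variance).
    """
    return params_billion * 1e9 * 16 / GB


def qlora_gb(params_billion: float, trainable_fraction: float = 0.01) -> float:
    """4-bit frozen base weights plus trainable adapters.

    trainable_fraction is an assumed value chosen for illustration only.
    """
    base = weights_gb(params_billion, 0.5)  # 4 bits = 0.5 bytes per parameter
    adapters = params_billion * 1e9 * trainable_fraction * 16 / GB
    return base + adapters


if __name__ == "__main__":
    print(f"FP16 weights, 70B:   {weights_gb(70, 2):.0f} GB")      # 140 GB
    print(f"Full fine-tune, 70B: {full_finetune_gb(70):.0f} GB")   # 1120 GB
    print(f"QLoRA sketch, 70B:   {qlora_gb(70):.1f} GB")           # 46.2 GB
```

Treat the output as a sizing starting point: batch size, sequence length, and activation checkpointing all move the real number.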

For multi-GPU setups, frameworks like DeepSpeed ZeRO and FSDP allow you to shard model states across GPUs, distributing memory requirements efficiently.
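To show what sharding does to the per-GPU footprint, the sketch below follows the commonly cited ZeRO accounting of 2 + 2 + 12 bytes per parameter (FP16 weights, FP16 gradients, FP32 Adam states). It is an estimate of model states only, not a measurement, and the function name is hypothetical. FSDP with full sharding behaves roughly like stage 3 here.

```python
def zero_per_gpu_gb(params_billion: float, num_gpus: int, stage: int) -> float:
    """Approximate per-GPU memory (GB) for model states under ZeRO stages 0-3.

    Stage 0 replicates everything; stage 1 shards optimizer states;
    stage 2 also shards gradients; stage 3 also shards the weights.
    Activations and communication buffers are not included.
    """
    if num_gpus < 1 or stage not in (0, 1, 2, 3):
        raise ValueError("num_gpus must be >= 1 and stage must be 0, 1, 2, or 3")
    psi = params_billion * 1e9
    weights, grads, optim = 2 * psi, 2 * psi, 12 * psi
    if stage >= 1:
        optim /= num_gpus
    if stage >= 2:
        grads /= num_gpus
    if stage >= 3:
        weights /= num_gpus
    return (weights + grads + optim) / 1e9


if __name__ == "__main__":
    # Illustrative case: a 70B-parameter model spread across 8 GPUs.
    for stage in range(4):
        print(f"ZeRO stage {stage}: {zero_per_gpu_gb(70, 8, stage):.1f} GB per GPU")
```

For this illustrative case, stage 3 brings the per-GPU model states down to about 140 GB, which is why full fine-tuning of very large models usually pairs sharding with CPU offload or a parameter-efficient method.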

Skorppio workstations come equipped with up to 384 GB of VRAM (4× RTX PRO 6000) or 768 GB (8× RTX PRO 6000) in server configurations, giving you room to fine-tune the largest open models without compromise.

How quickly can I get hardware?

Most Skorppio systems ship within 48 hours of order confirmation, and some configurations are available for next-day delivery depending on your location and inventory. We maintain ready-to-ship inventory specifically for AI and ML workloads so you’re not waiting weeks for provisioning.

Can I run PyTorch, Hugging Face, and CUDA without modification?

Yes. Every Skorppio workstation and server ships with the full CUDA toolkit, compatible NVIDIA drivers, and a clean Ubuntu environment ready for your stack. PyTorch, Hugging Face Transformers, JAX, TensorFlow — all run natively. You get full root access, so you can install and configure anything you need without restrictions.
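If you want to confirm the stack on arrival, a quick check along these lines can help. This is a minimal sketch using only the Python standard library; the function names and the default package list are assumptions for illustration, and the PyTorch check only runs if `torch` is already installed in the active environment.

```python
import importlib.util
import shutil


def check_stack(packages=("torch", "transformers", "jax", "tensorflow")):
    """Report which NVIDIA tools are on PATH and which packages are importable."""
    report = {
        "nvidia-smi": shutil.which("nvidia-smi") is not None,  # driver utility
        "nvcc": shutil.which("nvcc") is not None,              # CUDA compiler
    }
    for name in packages:
        # find_spec locates a top-level package without importing it.
        report[name] = importlib.util.find_spec(name) is not None
    return report


def torch_cuda_status():
    """Return (cuda_available, gpu_count), or None if PyTorch is not installed."""
    if importlib.util.find_spec("torch") is None:
        return None
    import torch
    return torch.cuda.is_available(), torch.cuda.device_count()


if __name__ == "__main__":
    for item, found in check_stack().items():
        print(f"{item:12s} {'found' if found else 'missing'}")
    print("torch CUDA status:", torch_cuda_status())
```

A "missing" entry for a Python package only means it is not installed in the environment you ran the script from; with root access you can add it with your usual package manager.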

What is the minimum rental period?

Our minimum rental period is one week. Monthly rentals are available at a reduced rate, and we offer flexible terms for longer engagements. Whether you need a system for a sprint, a quarter, or an ongoing project, we’ll match the term to your timeline.

How does multi-GPU distributed fine-tuning work on PCIe?

Skorppio’s multi-GPU workstations connect each GPU over a PCIe 5.0 x16 link, which provides roughly 64 GB/s in each direction (about 128 GB/s bidirectional). For distributed fine-tuning, frameworks like PyTorch DDP, FSDP, and DeepSpeed handle gradient synchronization and model sharding efficiently over PCIe, so most workloads do not need NVLink.

PCIe-based systems are ideal for data-parallel training, LoRA/QLoRA fine-tuning, and inference pipelines where each GPU processes independently or shares lightweight updates. You get the multi-GPU benefit without the premium of NVLink for workloads that don’t require ultra-high inter-GPU bandwidth.
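To put numbers on that, the sketch below derives the theoretical PCIe 5.0 x16 rate and estimates an ideal ring all-reduce time for one gradient synchronization. It assumes a ring all-reduce, where each GPU transfers 2(N-1)/N times the payload, and it ignores latency, protocol overhead, and traffic routed through the CPU, so real timings will be slower. The 7B-parameter model and the 40M-parameter adapter are hypothetical examples.

```python
def pcie5_x16_gb_per_s() -> float:
    """Theoretical per-direction bandwidth of a PCIe 5.0 x16 link in GB/s."""
    lanes = 16
    transfers_per_s = 32e9   # 32 GT/s per lane
    encoding = 128 / 130     # 128b/130b line encoding
    return lanes * transfers_per_s * encoding / 8 / 1e9


def ring_allreduce_seconds(payload_bytes: float, num_gpus: int,
                           link_gb_per_s: float) -> float:
    """Ideal ring all-reduce time: each GPU moves 2*(N-1)/N of the payload."""
    if num_gpus < 2:
        return 0.0  # nothing to synchronize on a single GPU
    traffic = 2 * (num_gpus - 1) / num_gpus * payload_bytes
    return traffic / (link_gb_per_s * 1e9)


if __name__ == "__main__":
    bw = pcie5_x16_gb_per_s()
    print(f"PCIe 5.0 x16: {bw:.1f} GB/s per direction")  # 63.0 GB/s

    full_grads = 7e9 * 2    # assumed: 7B parameters, FP16 gradients
    lora_grads = 40e6 * 2   # assumed: 40M adapter parameters, FP16 gradients
    print(f"Full gradients, 4 GPUs: {ring_allreduce_seconds(full_grads, 4, bw) * 1e3:.0f} ms")
    print(f"LoRA gradients, 4 GPUs: {ring_allreduce_seconds(lora_grads, 4, bw) * 1e3:.1f} ms")
```

Under these assumptions the adapter-only sync takes a couple of milliseconds while the full-gradient sync takes hundreds, which is the gap behind the point that LoRA and QLoRA workloads sit comfortably on PCIe.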