ACCESS TO ENTERPRISE HARDWARE

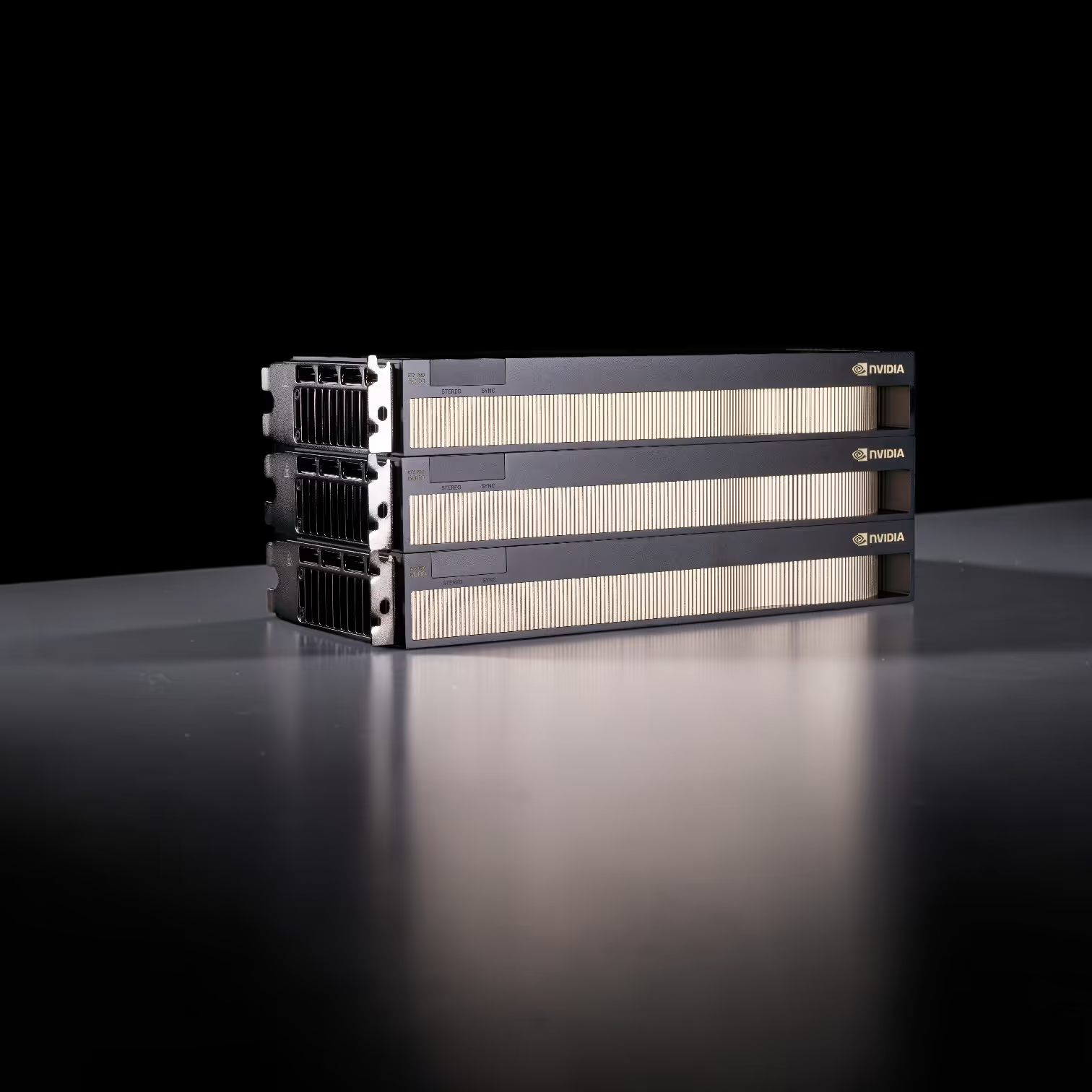

Skorppio is built on NVIDIA Blackwell GPUs, AMD CPUs, and enterprise memory and storage.

Hardware

FEATURED SYSTEMS

DUAL EPYC 8x RTX PRO 6000 SERVER

MULTI GPU THREADRIPPER PRO

RTX PRO 6000 ULTRA WORKSTATION

NVIDIA DGX SPARK FOUNDERS EDITION

SOLUTIONS BY INDUSTRY

Every industry has different compute demands. Select your sector to see how Skorppio systems are configured for your specific workflows and compliance requirements.

AI & MACHINE LEARNING

Multi-GPU servers with up to 768 GB VRAM for LLM fine-tuning, inference at scale, and retrieval-augmented generation. ECC memory and NVLink interconnect for memory-bound training workloads.

VFX & VIRTUAL PRODUCTION

RTX PRO Blackwell workstations and render nodes configured for Redshift, V-Ray, Nuke, Houdini, and DaVinci Resolve. Production-ready from 4K through 16K resolution.

ARCHITECTURAL VISUALIZATION

RTX PRO Blackwell workstations for real-time ray-traced visualization in Enscape, Twinmotion, V-Ray, and Lumion. Handle large-scale BIM models and photorealistic client presentations at 4K and above.

SCIENTIFIC RESEARCH

On-premise compute for labs and research institutions running CUDA-accelerated workloads — molecular dynamics, climate modeling, computational fluid dynamics, and genomics pipelines.

LIVE EVENTS

Portable and rack-mounted GPU systems for Notch, Disguise, TouchDesigner, and Unreal Engine in live production environments. Configured for real-time graphics and media playback at broadcast quality.

Hardware economics, GPU benchmarks, and deployment strategy — from the team that builds the systems.

Cloud Scale Is Unmatched. Here's Why On-Premise Still Matters.

THE SKORPPIO BRIEF

RTX 5090 vs RTX PRO 6000 Blackwell: What Actually Matters for Production

WHY COMPANIES RENT WITH SKORPPIO

DEPLOYMENT SCENARIOS

NEED A CUSTOM CONFIGURATION?

Tell us your workflow. We will provide in-depth technical insight and the right system architecture. Our team is ready to help.

Questions? Answers.

Frequently Asked Questions

Is on-prem GPU rental cheaper than cloud computing?

For sustained workloads running four weeks or longer, on-prem rental typically costs 40 to 60 percent less than equivalent cloud GPU instances. Cloud billing compounds quickly — hourly instance fees plus egress charges on every data transfer, storage surcharges, and premium pricing for reserved capacity. A single A100 cloud instance can exceed $25,000 per month at sustained usage before egress and storage fees. On-prem rental gives you a flat weekly or monthly rate with no hidden surcharges. The rental price is the total price.
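To make the comparison above concrete, here is a minimal sketch of the arithmetic in Python. Every figure in it (the hourly rate, egress volume, per-GB egress price, storage fee, and flat rental rate) is a hypothetical placeholder, not a Skorppio or cloud-provider quote, and the function names are illustrative only. Substitute your own numbers to see where your workload lands.

```python
def cloud_monthly_cost(hourly_rate, hours, egress_gb=0.0,
                       egress_per_gb=0.0, storage_fee=0.0):
    """Estimate one month of cloud GPU spend: instance hours plus
    egress and storage charges. All inputs are user-supplied assumptions."""
    return hourly_rate * hours + egress_gb * egress_per_gb + storage_fee


def rental_savings_pct(cloud_cost, rental_cost):
    """Percentage saved by a flat rental rate relative to the cloud estimate."""
    return 100.0 * (cloud_cost - rental_cost) / cloud_cost


# Hypothetical placeholder inputs for a workload running around the clock.
cloud = cloud_monthly_cost(
    hourly_rate=30.0,    # assumed instance price per hour
    hours=730,           # roughly one month of continuous use
    egress_gb=10_000,    # assumed data moved out of the cloud
    egress_per_gb=0.09,  # assumed egress price per GB
    storage_fee=500.0,   # assumed monthly storage charge
)
rental = 12_000.0        # assumed flat monthly rental rate

print(f"Cloud estimate:  ${cloud:,.2f}")
print(f"Rental estimate: ${rental:,.2f}")
print(f"Savings:         {rental_savings_pct(cloud, rental):.1f}%")
```

Because the rental side is a single flat figure, only the cloud side needs itemizing. Shorter run times or lower egress volume shrink the gap, which is why duration matters to the comparison.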

When should I use cloud GPUs instead of on-prem rental?

Cloud GPU is the right choice when you need massive elastic scale for short bursts. If your workload requires 500 GPUs for six hours, cloud delivers that flexibility better than any on-prem option. Cloud also makes sense for prototyping and experimentation where you need quick access to different GPU architectures without commitment, or for geographically distributed teams that need compute in multiple regions simultaneously. The crossover point is duration and predictability — once a workload runs steadily for weeks or months, on-prem rental almost always wins on cost and performance.

What workloads perform better on dedicated on-prem hardware than cloud?

Workloads that benefit most from on-prem rental share common traits: they run for weeks or months rather than hours, they move large datasets that would trigger cloud egress fees, they require deterministic latency that shared cloud tenancy cannot guarantee, or they fall under compliance frameworks like ITAR, HIPAA, or CMMC that mandate physical data control. Specific examples include sustained AI model training and fine-tuning, VFX rendering pipelines, real-time inference serving, large-scale simulation, and any workflow where GPU utilization stays above 50 percent for extended periods.

How does on-prem rental handle data sovereignty and compliance requirements?

On-prem rental hardware sits in your facility, on your network, behind your firewall. Your data never transits a third-party provider's infrastructure. This is a hard requirement for organizations operating under ITAR, HIPAA, CMMC, or internal data governance policies that prohibit shared cloud tenancy. Cloud providers offer compliance certifications, but the data still moves through shared infrastructure and provider-controlled networks. For air-gapped environments or workloads involving controlled unclassified information, on-prem rental is often the only deployment model that satisfies both the technical and regulatory requirements.

What is cloud repatriation and why are teams moving GPU workloads off cloud?

Cloud repatriation is the trend of organizations moving workloads from public cloud back to on-premise infrastructure. For GPU-intensive work, the drivers are consistent: unpredictable costs from egress fees and hourly billing, GPU scarcity on hyperscalers making H100 and A100 availability unreliable, performance variability from shared tenancy and noisy neighbors, and data sovereignty mandates that shared infrastructure cannot satisfy. Teams are not returning to traditional hardware ownership. They are choosing on-prem rental as a third option that delivers dedicated bare-metal performance and full data control without the capital burden of purchasing.

We pit the NVIDIA DGX Spark against the Mac Studio in a "Race to 1 Million Tokens." The results prove that in high-throughput agentic workflows, the most efficient machine is not the one with the lowest idle wattage—it's the one that finishes the job first.

Renting a high-performance workstation is unfamiliar territory for many teams, especially under tight deadlines. This article explains exactly how the Skorppio rental process works so there are no surprises.